
The Internet is full of ‘wicked problems’ and the latest cyberattack – the WannaCry ransomware – is no different. WannaCry has so far infected more than 200,000 computers in 150 countries, with the software demanding ransom payments in Bitcoin in 28 languages.

In a sense, WannaCry could be characterized as a ‘wicked problem’: so far, we have incomplete knowledge about the exact parameters of the attack and there is great uncertainty regarding the number of people actually involved. At the same time, Microsoft and the institutions hit by the attack have all suffered a large economic burden, while the interconnected nature of the attack has created significant problems and has had a spillover effect on the operation of various services, including, most notably, hospitals, transportation and telecommunication providers. Notwithstanding these features though, this specific cyberattack is not your typical ‘wicked problem’. Although it will be hard to fully solve, we will still be able to solve it in the end. This makes it less of a “wicked problem” and more of just another security problem. What is important though is what this attack tells us: the real “wicked problem” of the Internet is currently security (in a more general sense). Discussions about Internet security have always been widespread, and even more so lately. Most such discussions, however, focus more on the policy agendas of nation states than on the concept of Internet security itself. Often, this takes the form of giving security high priority and equating it with issues like human rights, economics, social injustice and the risk of the Internet being used to carry out military threats. Such thinking is usually buttressed with a combination of normative arguments about which values of which people should be protected, and empirical arguments as to the nature and magnitude of threats to those values. In our effort to understand – and, thus, attempt to resolve – security questions in the Internet, we face a significant limitation: we may know we have an overall security problem but we continue to fail to fully understand its scope, parameters and dimensions. And, with no definitive problem, getting a definitive solution becomes something of an impossible task.
To this end, in order to understand the Internet security conundrum, we need to understand the complexity in approaching ‘wicked problems’. Academic Tim Curtis says that a “wicked problem” is one in which “the various stakeholders can barely agree on what the definition of the problem should be, let alone what the solution is”. Sounds familiar? In addressing security questions on the Internet, the different stakeholders are normally in full disagreement about the exact problem: governments tend to approach security as a national policy issue; businesses see it as purely economic; for the technical community it is usually a question about the reliability and resiliency of the network; and, civil society sees the whole issue under human rights considerations. This inability of affected and interested parties to reach agreement feeds into the mistaken perception that the security issue is somewhat broken. And, this creates the danger that security will exist as a “wicked problem” in perpetuity. As Curtis accurately argues: “Problems are intrinsically wicked or messy, and it is very dangerous for them to be treated as if they were ‘tame’ or ‘benign’. Real world problems have no definitive formulation; no point at which it is definitely solved; solutions are not true or false; there is no test for a solution; every solution contributes to a further social problem; there are no well-defined set of solutions; wicked problems are unique; they are symptomatic of other problems; they do not have simple causes; and have numerous possible explanations which in turn frame different policy responses; and, in particular, the social enterprise is not allowed to fail in their attempts to solve wicked problems.” Perhaps you are beginning to see what I mean. The crux is what is preventing us from finding solutions to the security challenges – whether they relate to ransomware attacks, attacks on national security or attacks directly against the network.
So, we need to find a middle ground that will allow solutions for such ‘wicked problems’ to emerge. And, this middle ground is collaboration. Lately, I have been thinking quite a lot about collaboration – the value it adds, the importance it carries and its ability to solve ‘wicked problems’. Along with Leslie Daigle and Phil Roberts, we have deliberated how collaboration can contribute towards providing a robust framework where solutions can emerge and answers can be found. So, we came up with the following features that can make collaboration work.

Whether this understanding of collaboration can solve all security problems, I do not know. What I know though is that it is a pretty good starting point. In fact, it is the only starting point. Governments need to disclose system security vulnerabilities as they discover them, businesses and the technical community must race to address them and users must demand that this is the case. This will only happen though if different actors talk to each other.

Note: Extracts taken from: Tim Curtis's essay The challenge and risks of innovation in social enterprises in Robert Gunn and Christopher Durkin's book Social Entrepreneurship: A skills approach.

Photo: Flickr, "Opportunity Knocks" by The Shifted Librarian

On Friday, September 30, 2016 - at the stroke of midnight - the IANA functions contract between the US government and ICANN ended quietly. This marked the end of an era, full of political struggles concerning the role of the US government over the Domain Name System (DNS). It also marked the beginning of a new one, full of opportunities and hope.

The termination of oversight over the IANA functions is more symbolic than anything. On October 1st, the US government did not turn off any Internet switch nor did it pass the keys of the Internet to ICANN. The US government was never holding such power to begin with. In a nutshell, what took place with the decision of the NTIA to allow the IANA contract to expire was the validation of the multistakeholder model of Internet governance. Historically, the multistakeholder model – the collaborative approach to dealing with Internet (policy and technical) issues – has not had an easy ride. Its efficiency and legitimacy to provide tangible and implementable policy recommendations has been questioned and it has often been characterized as an unworkable approach. Ever since it emerged during the second phase of the World Summit on Information Society (WSIS) in Tunis in 2005, multi stakeholder governance has been criticized for its lack of focus and for failing to identify the roles and responsibilities of its participating actors, especially governments. But, multistakeholderism has persisted and it seems that it now has scored its first big win. But, let’s be fair, multistakeholderism is a very awkward term. It is an invention that not everyone can easily relate to; it is so open-ended that it has been taken to mean a bunch of different things to a bunch of different people and institutions. But, if we forget the term for a minute, this invention is what has allowed non-state actors to be active participants in discussions that directly affect them. It has allowed collaboration to be front and center in preserving the Internet, addressing its challenges and finally being able to ensure its constant growth. Multistakeholderism is about collaboration and this is how we should view it. It is this collaborative model that has allowed us to address the various challenges over the past years, including the IANA transition. 
And, it is this collaboration that must carry us in the future. The successful transition of the IANA functions provides us with a solid framework to do so. So, where should we see its impact? The immediate impact should be seen in the debate over "enhanced cooperation", which was originally part of a compromise on the future of the Internet at the WSIS in 2005. At the time, agreement could not be reached over the governance of critical Internet resources, including the DNS. Enhanced cooperation was seen as the focal point where stakeholders would collaborate towards a more participatory governance structure. And, for years now, the Commission on Science and Technology for Development (CSTD) has been trapped in a never-ending argument that consistently seemed to circle back to the unresolved issue of the US government’s role over the DNS. With IANA out of the way, this argument no longer persists. This provides the CSTD and its participating actors with a unique opportunity to advance their thinking. The CSTD working group has the opportunity to find a new identity and make a whole new contribution to Internet governance. Rejuvenating the discussions at the CSTD level could also help rejuvenate the discussions in other fora, UN and non-UN. More fundamentally, however, the impact of the IANA experience should be visible in the years to come, as we seek to find solutions to complex questions. One of the key takeaways of this two-year plus process is the set of tools that we now have at our disposal -- tools that were always there but right now should be visible to all of us.

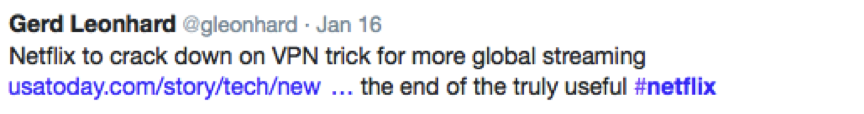

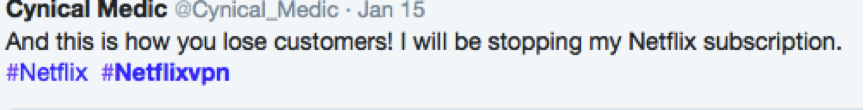

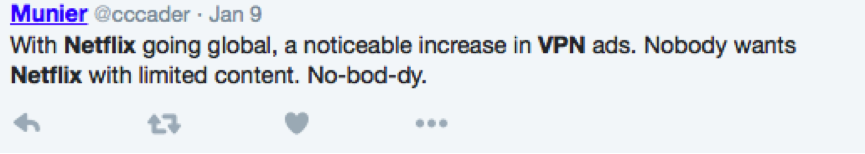

These are some of the obvious impressions the IANA transition process has to offer; of course, there are more. In moving forward, we should celebrate this important milestone the community has reached. But, we should also seize this opportunity to continue building a governance framework that allows people to come together and collaborate for a more inclusive Internet.

Has Netflix made its first mistake yet by betraying its growing network of loyal customers? In a statement, titled “Evolving Proxy Detection as a Global Service”, Netflix Vice President David Fullagar said that he wants to prevent its subscribers from using Internet proxies or hiding behind Virtual Private Networks (VPNs) to access content outside their own countries. “[…] in coming weeks, those using proxies and unblockers will only be able to access the service in the country where they currently are. We are confident this change won’t impact members not using proxies.” We can only make assumptions as to why Netflix has decided to do this. What is certain though is that, for many, this move will definitely change the perception they have of Netflix: a perception of a company that has not only depended on the Internet for its new business model, but has also respected the Internet's ability to offer users a variety of options. We need to acknowledge of course that Netflix has not had an easy ride. It is something of an open secret that Netflix has faced a big challenge to license or keep licensed content for its users. As the company grew, so did the costs associated with licensing content. In 2011, analysts estimated that Netflix spent $700 million for content licensing, which was expected to go up to $1.2 billion in 2012. This is a lot of money. No wonder Netflix has consistently over the past few years tried to rely less and less on the content of others and has focused on creating its own original content.
Shows like House of Cards or Orange is the New Black have been hugely successful for the streaming giant and have even been part of the awards glitz and glam, but are still not enough to keep Netflix in the content competitive race. And, in August of last year, Netflix’s deal with Epix – the distributor of Hollywood blockbusters like the “Hunger Games” and “Transformers” – ended, which automatically made the company’s shares tumble for a little while. So, the hard truth is that Netflix needs Hollywood more than Hollywood needs Netflix. But, what Netflix needs even more than Hollywood is the trust of its customers. And, its latest move seems to be turning its customers away from the company at a time when Netflix needs them most. As the company prepares for a huge expansion into more than 130 countries, one cannot help but wonder whether the streaming giant will appeal to users in countries with a confined catalogue. As soon as news broke that Netflix would ban users from using VPNs, the Twitter-sphere went on fire. Here is a selection:

In the Internet, trust is as important as water is to human life. Unless users are able to trust the provider, the services and their delivery, they look for something else. In this sense, trustworthiness and trustability walk hand in hand. In a Harvard Business Law Review article examining “Trust in the Age of Transparency”, the authors make it clear that “[for a company to succeed] beyond trustworthiness, you must achieve “trustability.” It’s a more proactive stance that has you not just keeping up your end of a bargain but ensuring that the bargain is the best one from the customers’ point of view […]”. Not allowing users innovative ways, like using Internet proxies, to access your content is certainly not what is best for Netflix’s customer base.

Netflix appears as if it is turning its back on its users. But, to what end? Netflix could easily use the fact that users opt for VPNs to demonstrate that the current licensing regime is simply not working. It could use this data to demonstrate that in a global place like the Internet, we need to work towards identifying a more efficient, cost-effective and reliable way to license content. Instead, it opted for the ‘easy’ solution. We will just have to wait and see whether in the end Netflix will lose customers. But, notwithstanding this, Netflix will never be for its users what it was when it started.

We live in a world of information abundance and the proliferation of ideas. Through mobile devices, tablets, laptops and computers we can access and create any sort of data in a ubiquitous way. But, it was not always like that. Before the Internet, information was limited and travelled slowly. Our ancestors depended on channels of information that were often subjected to various policy and regulatory restrictions.

The Internet changed all that. Suddenly everything became possible; everyone had the same opportunities to become a creator or publisher of ideas or a distributor of information. The Internet connected people and their ideas; it has contributed to social empowerment and economic growth. But, have we ever stood long enough to consider what makes the Internet such a special invention? What is it that makes the Internet such a multifaceted tool that never ceases to amaze us with its potential? In a nutshell - it is “permissionless innovation”. Vint Cerf, one of the fathers of the Internet who originally coined the term, has argued that permissionless innovation is responsible for the economic benefits of the Internet. Based on open standards, the Internet gives the opportunity to entrepreneurs, creators and inventors to try new business models without asking permission. But, permissionless innovation is even more than that. It is about experimentation and exploration of the limits of human imagination. It is about allowing people to think, to create, to build, to construct, to structure and to assemble any idea, thought or theory and turn it into the new Google, Facebook, Spotify or Netflix. As Adam Thierer says in his book “Permissionless Innovation: The Continuing Case for Technological Freedom”: “Permissionless innovation is about the creativity of the human mind to run wild in its inherent curiosity and inventiveness. In a word, permissionless innovation is about freedom.” Freedom, not anarchy. Leslie Daigle eloquently places the freedom aspect of permissionless innovation into context in her blog post “Permissionless innovation: openness, not anarchy”: "Permissionless innovation" is not about fomenting disruption outside the bounds of appropriate behaviour; "permissionless" is a guideline for fostering innovation by removing barriers to entry. This makes permissionless innovation an inseparable part of the Internet.
Standards organizations that have witnessed the benefits of permissionless innovation refer to it as “the ability of others to create new things on top of the existing communications structures. Ultimately, all entities are working toward the same goal and developments by one party can aid in the creation of another.” Of course, all this freedom should not be seen in isolation from our societal structures. It is freedom that operates within certain boundaries of behavior that test the manifestations of permissionless innovation. These boundaries can be normative or the rule of law. But, they kick in after the creator has created and after the inventor has invented in a permission-free environment. And, so they should. Permissionless innovation is not a sign of disorder; it indicates structured order. Imagine a world without Facebook or YouTube. Imagine a world where your creations can be subject to authorization by third parties. Imagine a world where the end result of your product is only part of what you have imagined. Imagine a world where ultimately certain uses of the Internet are prohibited. But, not so long ago, use of the Internet was prohibited. The 1982 MIT handbook for the use of ARPAnet – the precursor of the Internet – instructed the students: “It is considered illegal to use the ARPAnet for anything which is not in direct support of government business… Sending electronic mail over the ARPAnet for commercial profit or political purposes is both anti-social and illegal. By sending such messages, you can offend people, and it is possible to get MIT in serious trouble with the government agencies which manage the ARPAnet.” Now, instead of “government business” imagine “ANY business”. Permissionless innovation is key to the Internet’s continued development. We should preserve it and not question it.

The role of the US government in the administration of the Domain Name System (DNS) dates as far back as 1998.
At the time, various academics, myself included, questioned the role the United States government, through its National Telecommunications and Information Administration (NTIA), was exercising on the DNS. Questions regarding ICANN’s independence dominated much of the discussions also at WSIS and, in particular, at the first Internet Governance Forum (IGF). It was all everyone was talking about.

As Internet governance discussions evolved and matured, so did these questions. The Internet community was still asking but, at the same time, it was engaging in more thorough and considered discussions regarding the critical role the United States was exercising. That role was consistent, predictable and made the Internet run smoothly. The Internet was secure, stable and resilient. It was being established that, especially absent any viable alternative at the time, the US government was doing a very good job. But, questions persisted. That is until 14 March 2014, when the US government, reacting to years of discussion, released a statement, declaring its intention “to transition key Internet domain name functions to the global multistakeholder community”. And, with this sentence, the United States government demonstrated to the entire world that, given the right process and the right ingredients, it is willing to terminate its stewardship role over this part of the Internet’s infrastructure. For many this was long overdue. Under the 1997 Green Paper proposal on the “Improvement of Technical Management of Internet Names and Addresses”, “the US Government would gradually transfer [specific DNS functions] to [ICANN …], with the goal of having [ICANN] carry out operational responsibility by October 1998. Under the Green Paper proposal, the US government would continue to participate in policy oversight until such time as [ICANN] was established and stable, phasing out as soon as possible, but in no event later than September 2000”. Evidently, this deadline was never met. The 1998 MoU between the US government and ICANN was extended and then further extended and then re-extended (under a different name – the Joint Project Agreement or JPA), until it took the form of the Affirmation of Commitments in 2009. Assistant Secretary of Commerce for Communications and Information Lawrence E. 
Strickling stated that “the timing is right to start the transition process”, explaining the ‘why now’ question and inviting the Internet community to find a workable framework for the evolution of the IANA functions. As parties engage in this process, the US government is requesting that a set of conditions is met. These conditions illustrate a vision of an Internet that continues to thrive as a tool for innovation and creativity, is secure and as inclusive as possible. They should not be underestimated or taken for granted. The first concerns multistakeholderism. By entrusting the multistakeholder model to create a functioning process that would replace its stewardship role, the US government is demonstrating its integrity and its belief in multistakeholder governance. This is quite remarkable. The various stakeholders now have a window of opportunity to engage in substantive discussions and find ways to work alongside one another through a bottom-up, inclusive, transparent and accountable process. Caution and patience are required; failure of the stakeholders to demonstrate their capacity to build and adhere to a robust process can provide ammunition to the critics of the model and jeopardize years of discussions. Its spillover effect will be impactful and could extend to the Internet itself. As multistakeholderism becomes more ingrained into the operational aspects of the Internet, it now becomes even more significant to get this right. Naturally, this multistakeholder model will have to evolve and continue to demonstrate its flexibility; it will need to involve everyone and especially those materially-affected parties that are directly related to the various aspects of the Internet’s addressing and naming system. Materially-affected parties become key actors in this process because of their ability to maintain the security, stability and resiliency of the Internet.
Their experience in the day-to-day management of protocol parameters, IP numbers and names should be utilized to the fullest possible extent. They understand and they know what a secure and resilient Internet looks like. They are the trusted parties that have ensured a stable Internet for all these years; they have been highly accountable. They, too, will have to go through their own re-evaluation and re-thinking; but, they are able to provide answers to emerging questions. As we heard in Singapore, what stable, secure and resilient mean will have to be rethought and clearly explained. What are the tools? Are there pre-existing mechanisms that ensure this? Are there specific principles that need to be applied? Reaching a common and shared understanding of such questions becomes critical. The first step is for them to be addressed in any forum supporting multistakeholder participation. Singapore was the beginning. As they continue to be addressed, new ones, of course, will emerge. Questions on accountability will be key for this to work. But, it is too early to come up with a conclusive solution. What is required now is continuing the dialogue through a collaborative process. The way I see it (and as the NTIA statement instructs) all this work is to ensure the openness of the Internet – openness that has contributed to economic growth, has facilitated the exchange of knowledge, has introduced different ways of social interaction, has empowered people, has addressed issues of poverty and illiteracy and has connected billions of us together. The IANA functions play a critical part in what it means to have an open Internet. Let’s get to work and get this right!

Turkey’s recent move to tighten government control of the Internet should again make us think of actions and how they impact the Internet. It is the latest piece in a string of similar decisions made by various governmental and non-governmental actors to use the Internet as a tool to acquire a certain degree of control.
But, I think that the belief that anyone is entitled to any form of control of the Internet is quite misguided.

The truth is that the Internet has created a faux sense of entitlement. We see governments increasingly employing measures to address what they consider vital to preserve their sovereign-based rights. We see something similar with businesses, only they want to preserve market power. But, as has repeatedly been stated, such actions can have an impact on the Internet. Turkey is just the most recent example. Blocking, filtering and other mechanisms have been constantly employed to control speech or to address intellectual property concerns with the ultimate goal of controlling user behavior. This is precisely what Mr. Erdogan’s government is trying to do with this new Internet law. The new law will allow authorities – deemed appropriate by the Turkish government – without a court order or any other due process, to block websites under the pretense of protecting user privacy and public order. According to the New York Times: “The new law is a transparent effort to prevent social media and other sites from reporting on a corruption scandal that reportedly involves bid-rigging and money laundering.”[1] Turkey’s law is driven by a combination of agendas. It is a political effort by Mr. Erdogan to manage corruption scandals that his government cannot seem to shake off. As the New York Times reported “in one audio recording, leaked last month to SoundCloud, the file-sharing site, Mr. Erdogan is said to be heard talking about easing zoning laws for a construction tycoon in exchange for two villas for his family”.[2] It is also consistent with Turkey’s historical context of being a society that has never managed to become truly democratic. But, what makes Turkey an interesting case is that, over the past few years, industrially it has been thriving and its young population is realizing the benefits of the Internet. Turkey could seize the opportunity to use the Internet both for its economic and democratic evolution.
We are only starting to witness the effects of such actions. They are driving users to become increasingly savvier and more demanding. They push for freedom of information. Governments, on the other hand, often consider this freedom ‘unsafe’ and try to manage it. But safety, in the Internet, like security, is a process; it is not a one-off commitment. As Leslie Daigle, discussing security, accurately put it in a blog post: “In my opinion, the big difference the past year's revelations about government surveillance make is a step function change in understanding of credible threats.”[3] For the technical community the process of creating a more secure Internet involves the constant understanding of the network and an adherence to a set of basic principles – transparency, cooperation, accountability and due process. For policy makers, the process should involve questions and loyalty to the same set of principles the technical community operates. And, questions have started being asked. Dutch Member of the European Parliament, Marietje Schaake, said in a blog post: “These new laws strengthen the grip of the Turkish government on what can and cannot be published online and they restrict access to information. Freedom of speech and press freedom are already under a lot of pressure and Turkey is the largest prison for journalists. Since 2007, many websites have been blocked. The European Commission needs to show that the rule of law and fundamental freedoms are at the centre of EU policy. The Gezi Park protests, last summer, have shown that the Turkish people long for freedom and democracy, we must not leave them standing in the cold.”[4]

[1] http://www.nytimes.com/2014/02/22/opinion/turkeys-internet-crackdown.html?_r=0

[2] Id.

[3] http://www.circleid.com/posts/20140218_mind_the_step_function_are_we_really_less_secure_than_a_year_ago/

[4] http://www.marietjeschaake.eu/2014/02/mep-turkish-internet-censorship-violates-eu-rules/

For many years, I have observed that the Internet is adopting many self-regulation frameworks to address a variety of issues. Indeed the Internet has benefited from self-regulation as an efficient way to address jurisdictional conflicts - particularly as compared to traditional law making. Since the Internet is global, jurisdiction is often the most difficult policy issue to address. To this end, voluntary initiatives are becoming increasingly popular in the digital space due to their ability to address dynamically issues related to the Internet. Voluntary, self-regulatory or industry-based are all terms used to identify initiatives that are produced and enforced by independent (private) bodies or trade associations and focus on addressing issues that have a limited scope and are of a specific subject matter.

The United States Patent and Trademark Office (USPTO) recently issued a request for input on voluntary best practices[1] in the context of intellectual property. In light of this request and considering the newly formed Copyright Alert System (CAS) and other similar policy exercises around the world, the Internet Society offers its own reflections on voluntary policy initiatives. By outlining the advantages and disadvantages of self-regulation and identifying a set of best practices for self-regulation that include the need for periodic reviews, external and internal checks, and transparency, amongst others, the Internet Society wishes to promote the thesis that voluntary-based initiatives can prove efficient if they are carefully balanced and do not depart from the established principles and processes of rulemaking. The scale of Internet growth is reflected in the sheer complexity of crafting Internet policies and regulations; it is an indisputable fact that the Internet has put to the test the efficiency of traditional law making and its ability to deal with emerging technology trends. In order to deal with the increasing gap between legal and technology frameworks, many policy makers turn to systems of self-regulation and voluntarism. These systems are not new – since lex mercatoria (law of the merchant) in the Middle Ages, self-regulation has been a tool for regulators and policy makers to deal with complex commercial issues in an expedient manner. Self-regulation mechanisms can be efficient and offer a plethora of advantages, but they should not be considered a panacea. A clear rationale regarding their mandate and parameters is essential for self-regulation frameworks to address their intended purpose and stand the test of time. At the same time, tools for measuring the effectiveness of these approaches should also be in place to ensure that the outcomes are consistent with expectations and that they continue to meet the public interest over time.
Since the Internet is a global network of networks, national Internet public policies, whether they are based on self-regulation or traditional regulation, often have impacts beyond national borders. Thus, as voluntary-based mechanisms gain traction amongst policymakers as an alternative way to address complex online legal issues, these mechanisms will also have global implications. This is particularly true because, while self-regulatory tools are evolving in different jurisdictions, there are lessons to be learned across all of these experiences. Further, there are global policy lessons to be learned in terms of the effectiveness, processes and sustainability of these kinds of policy tools. What follows is a set of thoughts concerning self-regulation. Self-regulatory frameworks are appealing because they can be narrowly tailored to deal with specific legal issues but these tools are not the solution to all problems. In fact, successful self-regulation can only happen within established and legitimate frameworks of rule-making. Advantages and Disadvantages of Voluntary-based Initiatives The Internet Society is, generally, in favor of industry-based initiatives to address various issues, including those related to intellectual property; however, we are also mindful of the risks associated with these approaches. Whether based on theories of delegation or contract law, facilitated by the State or being a by-product of a “self-enforcing power, stemming from the direct deprivation of a valuable right”, the role of private bodies in self-regulatory environments is key.[2] At the outset, such private entities could prove beneficial in overseeing market participants’ actions through different processes such as standard setting, certification, monitoring, brand approval, warranties, product evaluation and arbitration. Academic literature and market practices (e.g. 
the European Advertising Standards Alliance in Europe) indicate that for self-regulatory mechanisms to be successful they should include standards for real consent, which help ensure the legality and legitimacy of contractual agreements as part of private regulation. In cases where consent is not present, public legal institutions are required to specify the criteria that entitle private regulatory regimes to acquiescence and immunity. But, ultimately, it is adherence to minimum standards of justice and fairness that determine the success of industry-based initiatives. Rules, consequential to private regulatory efforts should ensure that – to the extent possible – interested and affected parties are able to participate in voluntary based initiatives on an equal footing. Based on this set of minimum standards, private regulation offers some notable advantages in allowing the market to take the lead, offer a multitude of alternatives and ensure that fundamental values are protected by allowing interested parties to participate in the formation of rules and principles that are not subject to the cumbersome processes of traditional law making. As David Post, professor at Temple University and Fellow at the Center for Democracy and Technology, accurately put it: “We don’t need a plan but a multitude of plans from among which individuals can choose, and the market [...] 
is most likely to bring that plenitude to us”.[3] In a similar vein, Robert Pitofsky, former Chairman of the Federal Trade Commission (FTC), referring to industry-led regulation, enumerated the following advantages:
· Self-regulatory groups may establish efficient product standards;
· Private standard setting can lower the cost of production;
· Private regulation helps consumers evaluate products and services;
· Self-regulation may deter conduct that is universally considered undesirable but outside the purview of civil or criminal law; and,
· Self-regulation is more prompt, flexible and effective than government regulation.
Industry regulation, however, also has significant disadvantages. The recent failure of self-regulatory models in the financial markets leads many to question industry-based regulation as an efficient model. To this end, some scholars have challenged the legitimacy of private bodies, such as cyber-authorities, to deal with issues emanating from the Internet. Their main concern relates to the ability of such authorities to create policy and enforce rules that traditionally fall within the remit of the democratic state. In the words of a US scholar: "State-centered law - both legislation and constitutional adjudication - carries considerable weight in legitimizing creation beliefs and practices and delegitimizing others. […] A cyberauthority, in contrast, would have to start from scratch".[4] One of the most worrying aspects of private regulation is, arguably, that many of its advantages are based on false premises and loose criteria. Amongst other things, private regulation may easily fail to protect democratic values; it can neglect basic standards of justice; and, it is often less accountable compared to traditional governmental rule making.
More importantly, because of the Internet, self-regulation is increasingly initiated and imposed by new Internet sovereigns that do not necessarily operate within traditional principles of rule making. To this end, private regulation often suffers from lack of accountability and due process.[5]

Effectiveness of Voluntary Initiatives

Various countries, including the United States and the United Kingdom, have consistently supported meaningful, consumer-friendly, self-regulatory regimes for various issues ranging from privacy to intellectual property. As the United States government has stated: “To be meaningful, self-regulation must do more than articulate broad policies and guidelines”.[6] The Internet Society fully agrees with this premise – self-regulation emanating from voluntary based initiatives should extend to incorporate specific and reliable principles that allow participants and consumers/users to have a clear understanding regarding the delineation of the parameters, the scope of self-regulation and the accountability mechanisms for the public interest. We will approach the effectiveness of self-regulation from the perspective of a) Accountable and Transparent Information Practices; and, b) Characteristics of Effectiveness.

A) Accountable and Transparent Practices

1. Access: At a minimum, users need to be provided with the option of having access to information regarding every voluntary-based mechanism that might affect them and their online experience. In this respect, every actor engaged in voluntary practices should take reasonable steps to ensure that users are kept updated and informed about the process and substance of such self-regulatory initiatives.
2. Enforcement Policies: Enforcement policies articulate the steps that will be taken when illegal action is detected. On this basis, users should be able to understand the scope of enforcement and the parameters of their activity.
3. Notification: Enforcement policies, especially those emanating from self-regulatory schemes, should be made known to users as far in advance as possible. Notification should be written in language that is clear and easily understood, should be displayed prominently, and should be made available before users are asked to sign any contract regarding their Internet connection.
4. Education: Two things are important in this context. First, education should reflect unbiased opinions and should be conducted by trusted third-party sources, including academia. Second, education should not be limited only to users but should extend to every single entity or individual who is part of the Internet ecosystem.
5. Data Security: Given the volume of data collected in such industry-based schemes, private bodies creating, maintaining, using or disseminating records of identifiable personal information must take reasonable measures to assure its reliability and take reasonable precautions to protect it from loss, misuse, alteration or destruction.

B) Characteristics of Effectiveness

For a self-regulatory regime to be effective, it needs to include mechanisms that assure compliance with its rules and appropriate recourse to an injured party when rules are not followed.

1. Due process: Every voluntary-based initiative should adhere to basic and fundamental principles of justice and fairness, including, but not limited to, the right to a hearing, legal certainty and adherence to the rule of law.
2. Judicial safeguards: All voluntary-based initiatives should encompass internal and external checks and balances. One such balance is the right of appeal. However, this right is not self-sufficient and should be accompanied by a process that is affordable and accessible; it should further incorporate rules that are clear and incentivize its use. Finally, it also requires independence and impartiality of all the participants.
3. Transparency: Disclosure of information to the public about voluntary schemes is another significant feature of voluntary-based initiatives. This information should include, but should not be limited to, the system’s rationale, its end goal and how it affects interested parties.
4. Balanced and proportionate rules: Voluntary based mechanisms should strive towards creating rules that are balanced, reflect the rule of law and are proportionate.
5. Trust: Trust is becoming increasingly important in the spheres of policymaking and law crafting. Any voluntary-driven initiative should seek to build and create an environment of mutual trust, first amongst the actors setting up the system, but also amongst the actors to which the system is addressed.
6. Periodic reviews: All systems, including public ones, should be periodically reviewed and evaluated as to their effectiveness. Such reviews test the efficacy of policy mechanisms and their ability to provide answers to the issues they were originally created to address. In the context of the United States’ Copyright Alert System (CAS), for instance, the need for a robust review after its first year of operation is key in identifying potential gaps and omissions, a possible revision of its safeguards, a reframing of its deliverables and the precise role of the various actors.
7. Public Interest: To the extent that self-regulation aims at setting standards that principally reflect industry needs, there is a potential for the standards to reflect the industry’s interests rather than the public interest. It is, therefore, essential that self-regulation is neither collusive nor open-ended; it should not operate outside the wider regulatory framework or act independently. In such instances, the role of the government and public interest groups can aid in a monitoring function and lessen the opportunity for abuse and opportunistic changes to the self-regulatory mechanism.
Conclusion

Voluntary-based initiatives can prove valuable tools in the complex environment of policy making. Unlike public regulation, which is increasingly being seen as too slow to address the needs of a fast-paced Internet environment, self-regulation can provide efficient answers to important legal questions. But, self-regulation should not be seen as a cure for all the issues appearing in cyberspace. It is important that mechanisms based on industry initiatives include very specific and solid provisions relating to due process, fairness and justice; in addition, periodic review mechanisms as well as internal and external checks, including the right of an appeal, should also be parts of voluntary-based initiatives. To this end, a careful balance regarding goals and scope is necessary in order to ensure that self-regulation does not become a vehicle of abuse or misuse.

[1] https://www.federalregister.gov/articles/2013/06/20/2013-14702/request-of-the-united-states-patent-and-trademark-office-for-public-comments-voluntary-best
[2] Henry H. Perritt, Jr., Towards a Hybrid Regulatory Scheme for the Internet, 2001 U. Chi. Legal F. 215
[3] David G. Post, What Larry Doesn't Get: Code, Law, and Liberty in Cyberspace, 52 Stan L Rev 1439, 1458 (2000)
[4] Neil Weinstock Netanel, Cyberspace Self-Governance: A Skeptical View from Liberal Democratic Theory, 88 Cal L Rev 395, 497-98 (2000)
[5] Henry H. Perritt, Jr., Towards a Hybrid Regulatory Scheme for the Internet, 2001 U. Chi. Legal F. 215
[6] http://www.ntia.doc.gov/legacy/ntiahome/privacy/6_5_98FEDREG.htm

This blog post originally appeared at the Internet Society Public Policy page.

What made an organization like the Internet Society draft an issues paper on Intellectual Property? What is the aim of this paper? How does the paper relate to overall Internet governance discussions? And, what – if any – impact does it aim to have on the discussions regarding Intellectual Property?

At a time when there is a desire to resolve policy considerations by employing technological measures, the Internet Society, through an issues paper, amongst other things, seeks to chart a path forward: for the Internet Society, it is vital that policy makers develop public policy approaches that are consistent with the principles that have demonstrably worked. For instance, intellectual property enforcement solutions should not be at odds with the underlying architecture of the Internet -- technology can assist intellectual property rights in other ways (e.g. identification of the intent of the content creator), but enforcement is not one of them. The Internet is a unique tool for economic and social empowerment and we should ensure that it continues to perform this significant role. However, some policy initiatives over the last 18-24 months (SOPA/PIPA and ACTA) resulted in a highly publicized and deep schism between policy, technology and the various stakeholders. To this end, the Internet Society believes that it is important to articulate a set of minimum standards for all intellectual property discussions. Multistakeholder participation and inclusion, transparency, the rule of law, respect for the Internet’s architecture and upholding the open standards of the Internet, constitute the types of propositions that should be established in intellectual property governance. Fundamentally, the underlying premise of this paper is neither novel nor new. It is written with the intention to communicate and compile existing ideas that could contribute to the ongoing broad discussions relating to: a) the effect the Internet has on intellectual property rights and, b) the place intellectual property rights should occupy within the Internet ecosystem. 
Reflecting on the Intellectual Property discussions thus far, we appear to be lacking such minimum propositions that could help provide a framework for how intellectual property interactions are to be structured, shaped or fashioned. We lack a set of best practices that could provoke forward-looking approaches for how to address this highly contested issue more effectively. One of the first things we observe is that the realm of intellectual property remains one of the few thematic Internet governance areas that still lacks inclusive structures for stakeholder engagement. This is not to say that multistakeholder discussions relating to intellectual property are not taking place; but such procedural formats are not yet the primary mechanism for discussing intellectual property matters and their potential impact on the Internet. So, although we acknowledge that there is a conscious effort from some stakeholders to end the policy schism and urge the reconciliation of intellectual property with technology, the lack of overall inclusiveness precludes the emergence of a robust and sustainable way forward. None of this, of course, is new and the Internet Society’s issues paper does not seek to reinvent the wheel. What it seeks to do, however, is to reflect on the many considerations as they have developed from years of policy making and Internet governance processes. It is through these considerations that the Internet community will much better serve the need to promote the open development and use of the Internet for the benefit of all people throughout the world. So, the time is right to reflect and strategize on how to strengthen the dialogue through inclusiveness, transparent processes, adherence to the rule of law and respect for the Internet’s architectural design when talking about intellectual property on the Internet. You can access the paper here!
Konstantinos Komaitis
Policy Advisor for the Internet Society

Note: This blog post originally appeared on the Internet Society Public Policy page

Discussions at the annual meeting of the Internet Corporation for Assigned Names and Numbers (ICANN) in Costa Rica have been significantly dominated by the requests submitted by the International Olympic Committee (IOC) and the Red Cross and Red Crescent movement regarding the special protection of their names at the top-level domain name. This issue has actually been on ICANN’s agenda for quite some time now, but it reached its pinnacle yesterday (March 14, 2012), when, at the request of the Non-Commercial Stakeholder Group (NCSG), the issue was deferred, a move which meant that the Generic Names Supporting Organization (GNSO) Council was unable to vote on this issue. For many, this move signaled the end of ICANN as we know it, an apocalyptic end to the Internet’s biggest investment – the new gTLD program.

Being present at ICANN 43 and a member of NCSG and of the Drafting Team that has debated on this issue, I feel the need to clarify some things. First of all, the world is not going to come to an end and the new gTLD program is not in jeopardy. It would be outrageous to even suggest that a process, involving a debate of more than six (6) years is dependent upon granting these special protections. This scenario would send a bad signal to the rest of the Internet world and its institutions as to where ICANN’s true priorities lie. And, the world is watching! But, more importantly, one thing that needs to be made clear is that both these organizations are already ‘specially’ protected in this first round of the new gTLDs. According to the latest version of the Applicant Guidebook, the terms of the International Olympic Committee and the Red Cross and Red Crescent Movement “are prohibited from delegation as gTLDs in the initial application round”. This is clear. These terms are untouched and have been elevated to a completely different status, in comparison to those of other organizations, intergovernmental or not, that one can argue have a more significant mission, at least compared to the one of the International Olympic Committee. (Think here of UNESCO, WIPO, etc.) Yesterday, the debate, however, was not about substance – it was about process. The reason NCSG requested the deferral was not about whether these organizations deserve these protections; the reason was simple: the public comment period for the Drafting Team’s recommendations is not over and, thus, the GNSO cannot come to a decision unless the public comment period has expired. It is actually surprising that the GNSO did not feel the need to uphold the public comment period, an issue that constitutes a paramount element within ICANN’s processes and is part of its Affirmation of Commitments mandate. 
Under the Affirmation of Commitments, the document that establishes ICANN’s bottom-up and transparent model, “ICANN commits to maintain and improve robust mechanisms for public input, accountability, and transparency so as to ensure that the outcomes of its decision-making will reflect the public interest and be accountable to all stakeholders”. In particular, ICANN is to achieve these set goals by “continually assessing and improving the processes by which ICANN receives public input (including adequate explanation of decisions taken and the rationale thereof)”. So, questioning the need for the public comment period to make its full circle by some members of the GNSO Council is what puts ICANN and its processes in danger; it is not the deferral, which is aligned with these very principles. Plato famously said: “a good decision is based on knowledge and not on numbers”. For ICANN, this knowledge derives from public comments – public comments constitute the only way for ICANN to understand and learn the views of the wider community. So, the idea that we can circumvent such a pivotal process within the ICANN ecosystem and sacrifice due process in the name of speed is not only dangerous but it also sends a very bad message as to the democratic fractions that are supposed to be part of ICANN’s multistakeholder model. Comments submitted by Dr. Konstantinos Komaitis regarding the “Proposals for protection of International Olympic Committee and Red Cross/Red Crescent names at the top-level”

I would like to thank the Internet Corporation for Assigned Names and Numbers (ICANN) for this opportunity to submit comments in relation to the “Proposals for protection of International Olympic Committee and Red Cross/Red Crescent names at the top-level” domain names. First of all, I would like to mention that I am the current chair of ICANN’s Non-Commercial Users Constituency (NCUC) and one of the members of the Drafting Team (DT) that has submitted these recommendations for consideration by the wider Internet Community. In this particular instance, however, I am speaking in my own personal capacity as an academic and a Greek citizen. My concerns over these recommendations relate to issues of process, substance and effectiveness. In particular, I feel that this whole process takes a path that goes contrary to the idea of the bottom-up normative assessment the ICANN community has strived to develop over the years and opens a Pandora’s Box with ramifications that will be impossible to reverse. The primary flaw of this process that led to these proposals is that it has failed to distinguish between the requests made by the International Olympic Committee (IOC) and the Red Cross/Red Crescent movement and treat them as two separate issues. These are two organizations, which engage in completely different and unrelated activities, are currently being offered different levels of protection through traditional international and national legal instruments and their contribution to society differs significantly. 
In particular, the fact that the Red Cross/Red Crescent movement is involved in promoting and ensuring humanitarian relief in times of national and international catastrophes offers, at a preliminary level, a more sound foundation for the potential protection of its names and terms in the Domain Name Space (DNS); on the contrary, the IOC is an organization that receives a large number of sponsorship deals, which generate “more than 40% of Olympic revenues”[1] (some of its commercial partners include SAMSUNG, COCA COLA, GENERAL ELECTRIC (GE), MCDONALDS, VISA and PANASONIC), and its role, albeit significant within the sports industry, should not be mixed with humanitarian or public interest values. On the issue of process, it has been obvious that ICANN departed significantly from its long-fought and established bottom-up processes. ICANN’s Board decision to prohibit the “delegation [of these names] as gTLDs in the initial application round”[2] went against the bottom-up establishment within ICANN and undermined its main policy multistakeholder body – the Generic Names Supporting Organization (GNSO) Council. (At this stage, it is important to clarify that a decision has already been made concerning the protection of these terms in the first round). This new set of recommendations seeks to go beyond and reinforce the Board’s decision by creating a panoply of various protections and safeguards that, one can argue, re-interpret international law. What is even worse is the unreasonable pressure that has been placed upon the Drafting Team to come up with these recommendations, which is manifested by the rush and the urgency of this public comment period and the likelihood that the GNSO Council may be asked to vote on this recommendation during the 43rd ICANN meeting in Costa Rica, only a week after the public comment period has opened.
This means that the GNSO, when making its decision, will, most likely, not have the appropriate input of the community, within and outside ICANN; this is something that can potentially undermine any of its future work. On the issue of substance the recommendation of the Drafting Team enters a dangerous territory. Under recommendation 1 - “Treat the terms set forth in Section 2.2.1.2.3 as “Modified Reserved Names” – terms like ‘confusingly similar’ are vague, thus their meaning can easily be twisted, whilst there is also an obvious attempt to disincentivize even legitimate rights holders from engaging in any type of registration at the top-level name [paragraph c (ii) 3 of recommendation 1]. Even more problematic is recommendation 2, which seeks to re-interpret international Treaties and expand the rights traditionally afforded for these terms. This is particularly obvious in the case of the Olympic mark, which seeks to protect the names in multiple languages, including those of States that have not signed the Nairobi Treaty on the Protection of the Olympic Symbol. The Nairobi Treaty is the only standard that can be used by an international organization like ICANN in order to comply with the rule of law. ICANN is not a legislator and should not accept a ‘definitive list’ of languages that constitute an arbitrary compilation of national laws. Finally, there is no clear justification regarding recommendation 3. Considering the novelty, the time constraints and the controversial nature of these recommendations, in the likelihood that these recommendations pass, ICANN should call for a review after the first round of delegation of the new gTLDs has occurred in an attempt to reassess them. Considering effectiveness, these recommendations set a very dangerous precedent and send a bad message. 
Although reassurances have been made that this process is meant to address only the names of these two international bodies, it is the case that, should they be implemented, other international entities and institutions will have valid claims to demand the same levels of protection.[3] If pressure from these other international bodies intensifies, ICANN will have no option but to succumb. Accepting these recommendations leaves the ICANN community with no grounds against other international organizations and sets a dangerously flawed practice for the new gTLD program. Being a Greek citizen, I am particularly troubled by the levels of protection these recommendations seek to provide to the terms ‘OLYMPIC’, ‘OLYMPIAD’ and their variations in multiple languages. Greece is the place that gave birth to the Olympic Games and promoted the Olympic spirit that the world currently enjoys. The idea that the Greek community of Olympia (the place which marks the ceremony of the lighting of the Olympic flame) will have to ask permission from the International Olympic Committee to use a term that is part of its cultural heritage is highly problematic, illegitimate and goes against how the Applicant Guidebook views communities. I hope the ICANN community takes a much closer look at these recommendations and thinks carefully about the potential multifaceted impact they may have.

Respectfully submitted,
Dr. Konstantinos Komaitis, Senior Lecturer in Law

[1] http://www.olympic.org/sponsorship
[2] 2.2.1.2.3 of the Applicant Guidebook
[3] http://www.komaitis.org/1/post/2011/12/we-dont-accept-any-more-reservations-icann-pressured-to-reserve-names.html |

|